How to Fix Rate Limit Reached Error in DeepSeek?

Are you frustrated because you keep getting the “Rate Limit Reached” error in DeepSeek exactly when you need it the most, like in the middle of generating content or making batch API calls? Do you feel like your DeepSeek integration is falling apart, slowing down, and sometimes completely stopping, leaving you guessing what’s wrong?

This error can block your progress, kill your workflow, and waste a lot of your time. But here’s the good news: you don’t have to live with it. As a little SEO-whiz kid who also loves coding, I’ve found smart, proven ways to fix the “Rate Limit Reached” error in DeepSeek.

In this guide, I’ll walk you through why it happens, exactly how to handle it (with code!), and how to build your system so it avoids this issue in the future.

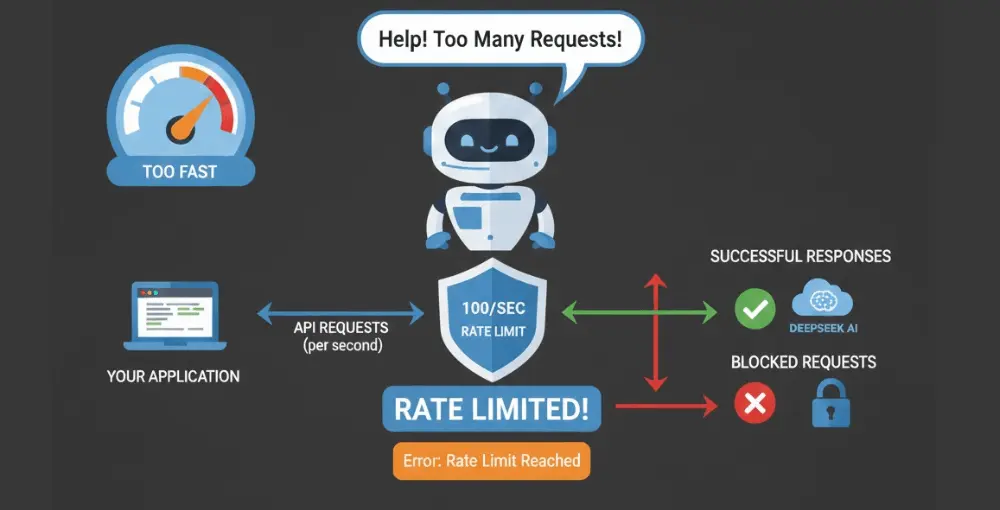

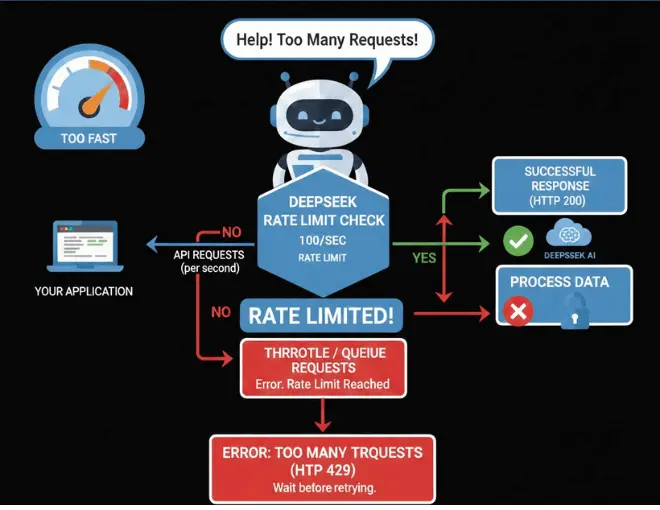

Why Does the “Rate Limit Reached” Error Happen in DeepSeek?

To fix something, you’ve got to understand it first. Here are key reasons why DeepSeek might throw a rate limit error:

How to Fix the “Rate Limit Reached” Error in DeepSeek

Now, let’s get to the solutions, step by step, from simpler fixes to more advanced system design.

1. Implement Slow-Down / Throttling (Limit Request Rate)

- Introduce a fixed delay between your API calls.

- This prevents sudden bursts of requests that trigger throttling.

Example (Python):

```python
import time

import requests


def call_deepseek(url, headers, payload):
    response = requests.post(url, headers=headers, json=payload)
    return response


api_url = "https://api.deepseek.com/v1/chat/completions"

headers = {
    "Authorization": "Bearer YOUR_API_KEY",
    "Content-Type": "application/json",
}

payload = {
    "model": "deepseek-chat",
    "messages": [{"role": "user", "content": "Hello, DeepSeek!"}],
}

for i in range(10):
    resp = call_deepseek(api_url, headers, payload)
    print(resp.status_code, resp.text[:100])
    time.sleep(1)  # wait 1 second between calls
```

This helps you “pace” your usage so you’re less likely to hit the limit.
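A fixed `time.sleep(1)` after each call ignores how long the request itself took, so your real request rate drifts. A slightly more precise option is an interval-based throttle that spaces request *starts* at least `min_interval` seconds apart. This is a minimal sketch: the `Throttle` class and the 1-second interval are illustrative assumptions, not limits published by DeepSeek.

```python
import threading
import time


class Throttle:
    """Ensure calls to wait() return at least `min_interval` seconds apart."""

    def __init__(self, min_interval):
        self.min_interval = min_interval
        self._next_allowed = 0.0  # monotonic time when the next call may start
        self._lock = threading.Lock()  # lets several threads share one throttle

    def wait(self):
        with self._lock:
            now = time.monotonic()
            delay = self._next_allowed - now
            if delay > 0:
                time.sleep(delay)
                now = time.monotonic()
            # Schedule the next slot relative to when this call actually starts
            self._next_allowed = now + self.min_interval


# Usage sketch (reusing call_deepseek, api_url, headers, payload from above):
# throttle = Throttle(min_interval=1.0)
# for _ in range(10):
#     throttle.wait()
#     resp = call_deepseek(api_url, headers, payload)
```

Because the lock is held while sleeping, threads sharing one `Throttle` take turns, which is exactly what you want when they all hit the same API key.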

2. Exponential Backoff + Retry Logic

When you do hit a 429 (“Too Many Requests”), you should retry, but carefully:

- Use exponential backoff: wait a little, then more, then more, for each retry.

- Use max retries so you’re not stuck in an infinite loop.

Example (Python):

```python
import time

import requests


def call_with_backoff(url, headers, payload, max_retries=5):
    base_delay = 1  # seconds

    for attempt in range(max_retries):
        resp = requests.post(url, headers=headers, json=payload)

        if resp.status_code == 200:
            return resp.json()

        if resp.status_code == 429:
            # DeepSeek may or may not send a `Retry-After` header. Fall back to
            # exponential backoff if it is missing or not a plain number of
            # seconds (HTTP also allows a date here, which int() can't parse).
            retry_after = resp.headers.get("Retry-After")
            try:
                wait = max(0, int(retry_after))
            except (TypeError, ValueError):
                wait = base_delay * (2 ** attempt)

            print(f"429 received. Waiting {wait}s before retry #{attempt + 1}")
            time.sleep(wait)
        else:
            # Other error statuses (4xx/5xx) raise requests.HTTPError
            resp.raise_for_status()

    raise RuntimeError("Exceeded maximum retries for DeepSeek API")


# Usage (reuses api_url, headers and payload from the previous example)
result = call_with_backoff(api_url, headers, payload)
print(result)
```

Example (Node.js / JavaScript):

```javascript
const axios = require("axios");

async function callDeepseekWithBackoff(url, headers, payload, maxRetries = 5) {
  const baseDelay = 1000; // 1 second

  for (let attempt = 0; attempt < maxRetries; attempt++) {
    try {
      const response = await axios.post(url, payload, { headers });
      return response.data;
    } catch (error) {
      if (error.response?.status === 429) {
        // Use Retry-After (seconds) if present and numeric; parseInt returns
        // NaN for a missing header or an HTTP date, so fall back to backoff.
        const retryAfter = Number.parseInt(error.response.headers["retry-after"], 10);
        const waitTime = Number.isNaN(retryAfter)
          ? baseDelay * Math.pow(2, attempt)
          : retryAfter * 1000;

        console.log(`429 received. Waiting ${waitTime / 1000}s (retry ${attempt + 1})`);
        await new Promise((r) => setTimeout(r, waitTime));
      } else {
        throw error;
      }
    }
  }

  throw new Error("Exceeded max retries for DeepSeek API");
}

// Usage
(async () => {
  const apiUrl = "https://api.deepseek.com/v1/chat/completions";
  const headers = {
    "Authorization": "Bearer YOUR_API_KEY",
    "Content-Type": "application/json",
  };
  const payload = {
    model: "deepseek-chat",
    messages: [{ role: "user", content: "Hello from Node!" }],
  };

  const data = await callDeepseekWithBackoff(apiUrl, headers, payload);
  console.log(data);
})().catch(console.error);
```
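Both examples above retry on a fixed doubling schedule, so many clients throttled at the same moment will also retry at the same moment. A common refinement is to cap the delay and add random “full jitter”, and to accept `Retry-After` in either of its HTTP forms (a number of seconds or an HTTP date). The helpers below sketch that; the function names and the 60-second cap are assumptions for illustration, and, as the code above notes, DeepSeek may or may not send `Retry-After` at all, so it is treated as optional.

```python
import random
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def parse_retry_after(value, now=None):
    """Return the Retry-After wait in seconds, or None if missing/unparseable.

    Handles both HTTP forms: delta-seconds ("5") and an HTTP date.
    """
    if value is None:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):  # older Pythons raise TypeError here
        return None
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())


def backoff_delay(attempt, base=1.0, cap=60.0):
    """Full-jitter backoff: a random delay in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * (2 ** attempt)))
```

In `call_with_backoff`, you could compute `wait = parse_retry_after(resp.headers.get("Retry-After"))` and fall back to `backoff_delay(attempt)` when it returns `None`.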

3. Cache API Responses

If your application makes repeated calls with the same parameters, caching helps a lot:

- Use a simple in-memory cache (for small apps) or Redis/Memcached (for larger ones).

- Before calling DeepSeek, check the cache.

- If data exists, return that instead of hitting the API again.

- Set a TTL (time-to-live) for cached items so you don’t serve stale data forever.

Example (Python with a dict cache):

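```python
import json
import time

import requests

cache = {}


def get_from_deepseek(url, headers, payload, ttl=60):
    # payload["messages"] is a list of dicts, which can't be used inside a
    # tuple key (dicts aren't hashable), so serialize the whole payload instead.
    key = (url, json.dumps(payload, sort_keys=True))
    now = time.time()

    # If in cache and not expired
    if key in cache and now - cache[key]["time"] < ttl:
        return cache[key]["response"]

    # Otherwise call the API; raise on errors so a 429 is never cached
    resp = requests.post(url, headers=headers, json=payload)
    resp.raise_for_status()
    resp_json = resp.json()
    cache[key] = {"response": resp_json, "time": now}
    return resp_json
```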

This reduces repeated calls and helps avoid hitting rate limits.
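One limitation of the plain `dict` above is that it never evicts anything, so memory grows with every distinct prompt. Below is a minimal sketch of a size-bounded TTL cache built on `collections.OrderedDict`, where the least recently used entry is dropped first; the `TTLCache` name and default sizes are illustrative assumptions. For multi-process deployments, the Redis/Memcached option mentioned above is still the better fit.

```python
import time
from collections import OrderedDict


class TTLCache:
    """In-memory cache with a max size (LRU eviction) and a per-entry TTL."""

    def __init__(self, max_items=1000, ttl=60.0):
        self.max_items = max_items
        self.ttl = ttl
        self._data = OrderedDict()  # key -> (stored_at, value)

    def get(self, key):
        item = self._data.get(key)
        if item is None:
            return None
        stored_at, value = item
        if time.monotonic() - stored_at >= self.ttl:
            del self._data[key]  # expired: drop it and report a miss
            return None
        self._data.move_to_end(key)  # mark as recently used
        return value

    def set(self, key, value):
        self._data[key] = (time.monotonic(), value)
        self._data.move_to_end(key)
        while len(self._data) > self.max_items:
            self._data.popitem(last=False)  # evict the least recently used
```

`get` returns `None` for a miss, which is fine for JSON responses (dicts) but ambiguous if you ever cache `None` itself.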

4. Monitor and Handle Keep-Alive / Streaming Behavior

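When DeepSeek’s servers are under heavy load, a request can stay open for a while before the real response arrives. While you wait, non-streaming requests may receive empty lines and streaming requests may receive SSE keep-alive comments (“: keep-alive”), so your client needs to handle them properly.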

For example, if you’re reading raw HTTP response lines:

- Skip blank lines or “: keep-alive” lines.

- Don’t treat them as errors; keep reading until the actual JSON data arrives.

This ensures you don’t crash or mis-handle long-delayed responses.
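For streaming requests, the “skip blank and keep-alive lines” rule can be sketched as a small line filter. This assumes DeepSeek’s stream follows the OpenAI-compatible SSE format (`data: {...}` events ending with `data: [DONE]`); `iter_stream_events` is a hypothetical helper name. With `requests`, you would feed it `resp.iter_lines(decode_unicode=True)` from a `requests.post(..., stream=True)` call.

```python
import json


def iter_stream_events(lines):
    """Yield parsed JSON chunks from SSE lines, skipping blanks and keep-alives."""
    for raw in lines:
        if raw is None:
            continue
        # iter_lines() may yield bytes if the response encoding is unknown
        line = raw.decode("utf-8") if isinstance(raw, bytes) else raw
        line = line.strip()
        # Blank lines separate events; lines starting with ":" are SSE comments,
        # such as ": keep-alive" sent while the server is busy.
        if not line or line.startswith(":"):
            continue
        if not line.startswith("data:"):
            continue  # ignore other SSE fields (event:, id:, retry:)
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # end-of-stream marker (OpenAI-compatible convention, assumed)
        yield json.loads(data)


# Usage sketch (api_url, headers, payload as in the earlier examples):
# resp = requests.post(api_url, headers=headers,
#                      json={**payload, "stream": True}, stream=True)
# for chunk in iter_stream_events(resp.iter_lines(decode_unicode=True)):
#     print(chunk)
```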

5. Queue / Rate-Controlled Request System

If you have many requests (from many users or background jobs), consider building a queue system:

- Use a task queue (e.g., Celery in Python, Bull / Bee-Queue in Node.js).

- Enqueue your DeepSeek requests rather than calling immediately.

- Process them at a controlled rate (e.g., one request every 500ms, or whatever your safe interval is).

- Use a concurrency control mechanism so you don’t overload DeepSeek.

This helps smooth out spikes and makes sure you’re not sending bursts that cause “rate limit reached” errors.
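A task queue like Celery or Bull handles this across processes, but the core idea fits in a few lines of `asyncio`: a queue of jobs, a fixed number of workers (the concurrency cap), and a shared minimum interval between request starts. This is an in-process sketch; `run_rate_limited`, the `send` callback, the worker count and the 0.5-second interval are assumptions, and `send` stands in for whatever async function actually calls DeepSeek.

```python
import asyncio


async def run_rate_limited(jobs, send, concurrency=2, min_interval=0.5):
    """Run `await send(job)` for every job, with at most `concurrency` in flight
    and at least `min_interval` seconds between request starts."""
    queue = asyncio.Queue()
    for index, job in enumerate(jobs):
        queue.put_nowait((index, job))

    results = [None] * len(jobs)
    start_lock = asyncio.Lock()
    next_start = 0.0  # event-loop time when the next request may begin
    loop = asyncio.get_running_loop()

    async def worker():
        nonlocal next_start
        while True:
            try:
                index, job = queue.get_nowait()
            except asyncio.QueueEmpty:
                return  # no work left for this worker
            # Reserve a start slot so requests are spaced out evenly
            async with start_lock:
                delay = next_start - loop.time()
                if delay > 0:
                    await asyncio.sleep(delay)
                next_start = loop.time() + min_interval
            results[index] = await send(job)

    await asyncio.gather(*(worker() for _ in range(concurrency)))
    return results
```

The lock only covers choosing a start time, so while one request is waiting for DeepSeek, another worker can still start its own request on schedule.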

6. Circuit Breaker Pattern

Implement a circuit breaker:

- If your system detects too many 429 errors in a short time, “open” the circuit: stop making new DeepSeek calls temporarily.

- Wait for a cool-down period, then try again.

- This prevents cascading failures where your system just keeps sending failing requests.
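A simplified circuit breaker for 429s (without a separate “half-open” trial state) might look like this. The thresholds (5 rate-limit errors within 30 seconds, a 60-second cool-down) and the `CircuitBreaker` class are illustrative assumptions to tune against your own traffic; the clock is injectable only so the logic is easy to test.

```python
import time
from collections import deque


class CircuitBreaker:
    def __init__(self, max_failures=5, window=30.0, cooldown=60.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.window = window  # seconds over which 429s are counted
        self.cooldown = cooldown  # seconds to stay open before trying again
        self.clock = clock
        self._failures = deque()  # timestamps of recent 429s
        self._opened_at = None  # None means the circuit is closed

    def allow_request(self):
        if self._opened_at is None:
            return True
        if self.clock() - self._opened_at >= self.cooldown:
            # Cool-down over: close the circuit and let traffic through again
            self._opened_at = None
            self._failures.clear()
            return True
        return False

    def record_rate_limited(self):
        now = self.clock()
        self._failures.append(now)
        # Forget 429s that fell out of the counting window
        while self._failures and now - self._failures[0] > self.window:
            self._failures.popleft()
        if len(self._failures) >= self.max_failures:
            self._opened_at = now
```

In your request loop, check `breaker.allow_request()` before sending and call `breaker.record_rate_limited()` whenever DeepSeek returns a 429.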

7. Ask DeepSeek Support for Help

If the strategies above still don’t fully solve your problem, it’s worth contacting DeepSeek’s support:

- Explain how often you call, how many concurrent calls, and what your backoff strategy is.

- Share logs or error snippets (especially 429s).

- Ask if they can suggest a better usage pattern or even a special quota for your use case.

Important to Know

According to DeepSeek’s FAQ, they do not currently support manually increasing the dynamic rate limit for individual accounts.

8. Consider Alternative Providers Temporarily

If you’re constantly hitting DeepSeek’s rate limits and you need to keep your app running:

- Use a fallback API (like OpenAI or other LLM providers) during high-traffic times.

- This isn’t a “fix” for DeepSeek’s rate limit, but a practical workaround to avoid downtime.

- Some guides even suggest switching temporarily to different providers when DeepSeek is busy.
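The fallback idea can be sketched as trying providers in order and moving on only when the current one is rate-limited. The provider functions and the `RateLimited` exception are hypothetical placeholders: in practice each function would wrap a real client (for example, your DeepSeek call and an OpenAI call) and raise `RateLimited` when it gets an HTTP 429.

```python
class RateLimited(Exception):
    """Hypothetical error that a provider wrapper raises on HTTP 429."""


def complete_with_fallback(messages, providers):
    """Try each (name, call) pair in order; fall back only on rate limits.

    `providers` is a list such as [("deepseek", call_a), ("backup", call_b)],
    where each call takes the chat messages and returns the reply.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(messages)
        except RateLimited as exc:
            errors.append(f"{name}: {exc}")  # remember why this one was skipped
    raise RuntimeError("All providers are rate-limited: " + "; ".join(errors))
```

Other errors (a bad API key, an invalid request) are deliberately not caught, because switching providers would not fix them.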

Common Mistakes to Avoid

When trying to fix the rate limit reached error, here are some common pitfalls:

Why These Fixes Make Your Integration Stronger (Expert View)

- Implementing throttling + backoff makes your app polite to DeepSeek’s servers, reducing rejections.

- Caching saves you from unnecessary calls and speeds up your app.

- Queueing and a circuit breaker give you control, so you don’t overwhelm the API or crash your own system.

- Retry logic ensures that temporary rate-limit errors don’t permanently block you.

- Monitoring and logging make you smarter: you learn when and how rate limits hit, and you can improve.

Conclusion

Getting the “Rate Limit Reached” error in DeepSeek can be super annoying, but with the right strategy, you can massively reduce or even eliminate it. Use a combination of throttling, exponential backoff, caching, queueing, and smart error handling to make your DeepSeek integration reliable and efficient.